MTG Deck Analyzer

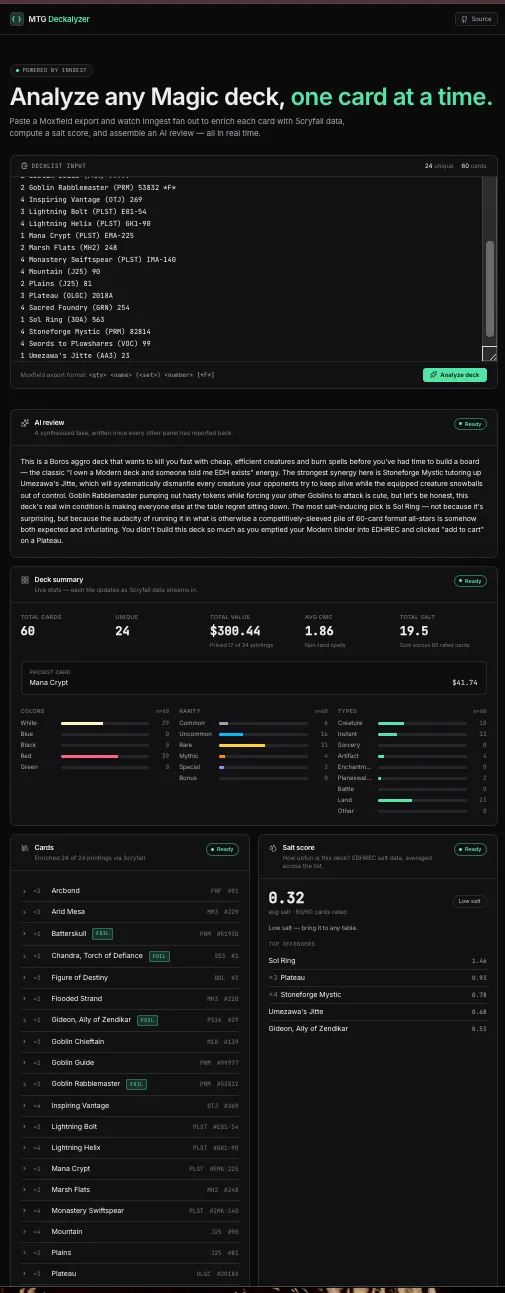

Paste a Moxfield decklist and watch card images, prices, salt scores, a WotC bracket classification, a composition report, and a Claude-written roast stream in as Inngest fans the work out.

I was fishing for a project — something concurrent, ideally in Go — and my friend Michael Roush pitched me his long-simmering Commander deck-building tool: feed it a decklist, get back EDHREC salt scores, a bracket classification, card replacement suggestions, the whole deal. I immediately heard “Inngest demo,” and you’re looking at the result.

What’s in it now: card images and prices from Scryfall, EDHREC salt scores, a WotC bracket classification built from four parallel signal checks (Game Changers, tutors, mass land denial, combos via Commander Spellbook), a ramp/draw/removal/board-wipe composition report scored against conventional deckbuilding targets, and a paragraph of Claude-written commentary at the end. Paste a Moxfield export, watch it all stream in, get roasted.

You can try it at mtg.thelinell.com.

Using Inngest

This is really an excuse to show off Inngest. The whole analysis is a single durable function with several parallel fan-outs that meet at a Promise.all:

- Scryfall enrichment runs in 8-card batches so each batch is its own step.run: retries, caching, and visible concurrency all come for free.

- EDHREC salt lookups fan out one step per unique card, in parallel with the Scryfall work.

- Four bracket signal checks (Game Changers, tutors, mass land denial, combos) run as four more parallel legs, each publishing to the realtime channel as it lands; a classifier step consumes all four once they resolve.

- A composition step pulls Scryfall oracle tags for ramp, draw, removal, and board wipes and scores the counts against conventional targets.

- A final ai-review step hands the enriched deck to Claude for a pithy paragraph.
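The batching in the first bullet is easy to picture in code. This is a minimal sketch, assuming a hypothetical `chunk` helper and made-up step IDs of the form `scryfall-batch-0`; neither name comes from the project's source.

```typescript
// Hypothetical helper: split a list into fixed-size batches.
function chunk<T>(items: readonly T[], size: number): T[][] {
  if (!Number.isInteger(size) || size <= 0) {
    throw new RangeError("size must be a positive integer");
  }
  const batches: T[][] = [];
  for (let i = 0; i < items.length; i += size) {
    batches.push(items.slice(i, i + size));
  }
  return batches;
}

// Give each batch a stable, index-based step ID (the naming is an assumption).
function batchStepIds(cardNames: readonly string[], size = 8): string[] {
  return chunk(cardNames, size).map((_, i) => `scryfall-batch-${i}`);
}
```

With a batch size of 8, a list of 99 names yields 13 batches, and the last one holds the 3 leftover cards.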

Fan-out is just Promise.all over step.run. Here’s the salt leg in full:

```typescript
const saltWork = Promise.all(
  uniqueNames.map((name) =>
    step.run(`salt-${name}`, async () => {
      const salt = await fetchSaltScore(name);
      await inngest.realtime.publish(ch["card:salt"], { runId, name, salt });
      return { name, salt };
    }),
  ),
);
```

That’s the whole pattern: give each step a stable ID and Inngest runs them in parallel, retries failures in isolation, memoizes their results across replays, and surfaces every leg in the dashboard. A deck with 99 unique cards produces 99 independent steps with zero extra plumbing. It’s a silly example, but the ergonomics are the point: the same shape holds whether you’re hitting EDHREC 99 times or kicking off 10,000 background jobs.
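To see why the stable ID matters, here is a toy model of step memoization. It is not Inngest's implementation, only an in-memory illustration of the idea; `ToyStepRunner`, its `Map`-backed store, and the stand-in "salt" computation are all invented for this sketch.

```typescript
// Toy model only: a real durable engine persists step results; a Map stands in here.
class ToyStepRunner {
  private readonly results = new Map<string, unknown>();
  public executions = 0;

  // Run `fn` once per step ID; later calls with the same ID reuse the stored result.
  async run<T>(id: string, fn: () => Promise<T>): Promise<T> {
    if (this.results.has(id)) {
      return this.results.get(id) as T;
    }
    this.executions += 1;
    const value = await fn(); // a throw here stores nothing, so a replay re-runs only this step
    this.results.set(id, value);
    return value;
  }
}

// A function body that gets replayed from the top, as durable functions are.
async function analyze(step: ToyStepRunner, names: string[]): Promise<number[]> {
  return Promise.all(
    // Stand-in work: the "score" is just the name length.
    names.map((name) => step.run(`salt-${name}`, async () => name.length)),
  );
}
```

Calling `analyze` twice with the same runner and the same names returns the same values both times, but `executions` only counts each step once. Change the ID scheme between replays and that reuse is lost, which is why the IDs need to be stable.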

The retries aren’t theoretical, either. Scryfall will happily 429 you on a bigger deck, and because each batch is its own step, Inngest just backs off and retries the offending batch — the rest of the fan-out keeps moving and I didn’t write a line of code to make that happen.

External calls degrade instead of fail, too. Warm cache hits get published before the Scryfall POST even fires, so an outage during a re-analysis still renders every card we’ve seen before — and on a fresh run, if Commander Spellbook or the Scryfall tagger is unreachable, the relevant panel shows “unknown” and the rest of the run still finishes.
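The degrade-instead-of-fail behavior can be approximated with a small wrapper. This is a sketch under assumptions: `withFallback`, the `PanelResult` type, and the stand-in lookups are illustrative names, not the project's actual code.

```typescript
// A panel either has data or is explicitly "unknown".
type PanelResult<T> = { status: "ok"; value: T } | { status: "unknown" };

// Hypothetical wrapper: turn a thrown error into an "unknown" panel state.
async function withFallback<T>(fetcher: () => Promise<T>): Promise<PanelResult<T>> {
  try {
    return { status: "ok", value: await fetcher() };
  } catch {
    return { status: "unknown" };
  }
}

// Stand-ins for the real lookups: one succeeds, one simulates an outage.
async function demo(): Promise<[PanelResult<string[]>, PanelResult<number>]> {
  return Promise.all([
    withFallback(async () => ["example combo"]),
    withFallback<number>(async () => {
      throw new Error("service unreachable");
    }),
  ]);
}
```

Because the wrapper never rejects, the surrounding `Promise.all` still resolves when one service is down. Swallowing the error also means that call would not be retried, so where a fallback like this sits relative to step retries is a design choice this sketch does not settle.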

One confession: the 8-card batch size and the 120ms publish stagger inside each batch are both smaller and slower than they strictly need to be. Scryfall’s collection endpoint happily takes 75 identifiers at a time, and nothing’s forcing the publishes apart — I tuned both down so the fan-out actually looks concurrent in the demo instead of landing as one indistinguishable flash. Demo affordances, not advice.

Typed realtime

The other reason Inngest earns its keep here is Realtime. Every intermediate result — each card, each salt score, each bracket signal — is published the instant its step resolves, so the UI fills in as the function runs. The channel declares every topic up front with a Zod schema, keyed to the browser tab:

```typescript
export const deckChannel = channel({
  name: (pageId: string) => `deck.${pageId}`,
  topics: {
    "card:salt": {
      schema: z.object({
        runId: z.string(),
        name: z.string(),
        salt: z.number().nullable(),
      }),
    },
    "bracket:signal": {
      schema: z.object({
        runId: z.string(),
        signal: bracketSignalSchema,
      }),
    },
    // card:ready, bracket:verdict, composition:ready, deck:review, run:done
  },
});
```

Publishing from inside a step is one line, and the payload is checked against the topic’s schema:

```typescript
const ch = deckChannel(pageId);
await inngest.realtime.publish(ch["card:salt"], { runId, name, salt });
```

The client uses the same channel definition, so subscribe() narrows each message to its topic’s payload type, with no hand-rolled router and no stringly-typed cases:

```typescript
const sub = await subscribe({
  channel: `deck.${pageId}`,
  topics: ["card:ready", "card:salt", "bracket:signal", /* ... */],
  onMessage: (message) => {
    switch (message.topic) {
      case "card:salt": {
        const { name, salt } = message.data; // typed
        updateSalt(name, salt);
        break;
      }
      // ...
    }
  },
});
```

No polling, no websocket plumbing. The channel is the contract, and a new topic costs about four lines on each side.
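The narrowing inside that switch is ordinary TypeScript discriminated-union behavior. Here is a self-contained sketch of the idea with no Inngest or Zod involved: the `TopicPayloads` map and `summarize` function are made up for illustration, and the `run:done` payload shape is an assumption.

```typescript
// Illustrative payload map; only "card:salt" mirrors the schema in the channel definition.
type TopicPayloads = {
  "card:salt": { runId: string; name: string; salt: number | null };
  "run:done": { runId: string }; // assumed shape
};

// Build a discriminated union: one { topic, data } variant per topic.
type Message = {
  [K in keyof TopicPayloads]: { topic: K; data: TopicPayloads[K] };
}[keyof TopicPayloads];

function summarize(message: Message): string {
  switch (message.topic) {
    case "card:salt":
      // `message.data` is narrowed to the card:salt payload here.
      return `${message.data.name}: ${message.data.salt ?? "no score"}`;
    case "run:done":
      // Here it is the run:done payload; `message.data.name` would not compile.
      return `run ${message.data.runId} finished`;
  }
}
```

Adding a topic to `TopicPayloads` without adding a matching `case` makes `summarize` fail to type-check, since not every path returns a string any more. That is the sense in which the channel acts as the contract.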

One more thing I’m pleased with: the analyze event’s ID is ${pageId}:${runId}, so when a client retries the POST — flaky network, impatient refresh, double-click — Inngest collapses the duplicates into a single run. No dedupe table, no idempotency-key header dance; the event ID is the dedupe key.
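As a rough illustration of the event-ID trick, the sketch below builds an event whose `id` follows the `${pageId}:${runId}` format and runs it past a toy in-memory receiver. The event name `deck/analyze.requested`, the `data` shape, and `ToyEventLog` are all placeholders; the real deduplication happens inside Inngest, not in application code like this.

```typescript
type AnalyzeEvent = {
  id: string; // doubles as the dedupe key
  name: string;
  data: { pageId: string; runId: string; decklist: string };
};

// The event name and data shape are placeholders; the ID format follows the text above.
function buildAnalyzeEvent(pageId: string, runId: string, decklist: string): AnalyzeEvent {
  return {
    id: `${pageId}:${runId}`,
    name: "deck/analyze.requested",
    data: { pageId, runId, decklist },
  };
}

// Toy receiver: accept an event only the first time its ID is seen.
class ToyEventLog {
  private readonly seen = new Set<string>();

  accept(event: AnalyzeEvent): boolean {
    if (this.seen.has(event.id)) return false;
    this.seen.add(event.id);
    return true;
  }
}
```

A retried POST rebuilds the same `id` from the same `pageId` and `runId`, so the second `accept` call returns `false`; a new `runId` produces a new ID and therefore a new run.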

Stack

- TanStack Start (React 19, Vite, file-based routing) for the frontend and API routes

- Inngest for durable functions and realtime streaming

- Scryfall for card data and oracle tags, EDHREC for salt scores, Commander Spellbook for combo detection

- Claude for the deck review

- Tailwind, Biome, Vitest

- Cloudflare Workers via Wrangler for deploys

I’m biased, obviously, but TypeScript + Inngest + Cloudflare was a pleasure for this — the parts I wanted to stay boring stayed boring.